Problem

What problem is the pattern looking to solve?

How do we store data centrally in a way that provides context and absorbs change, while also making it quickly available for use with minimal upfront work?

Solution

A generalised design which can be applied to solve the problem given the context

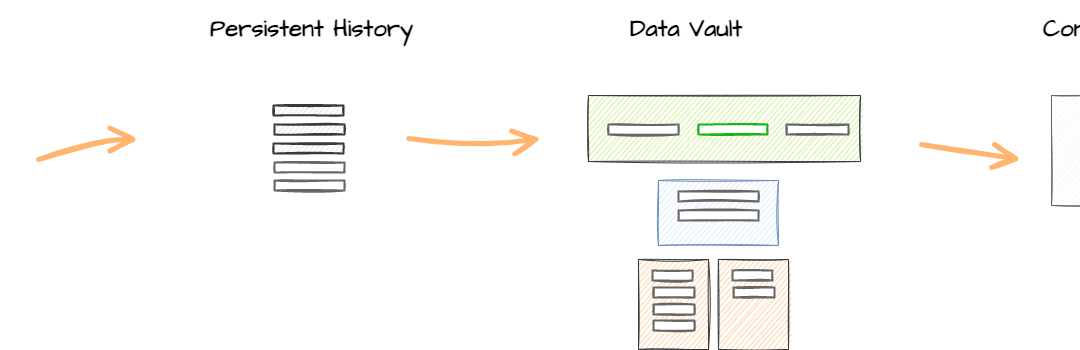

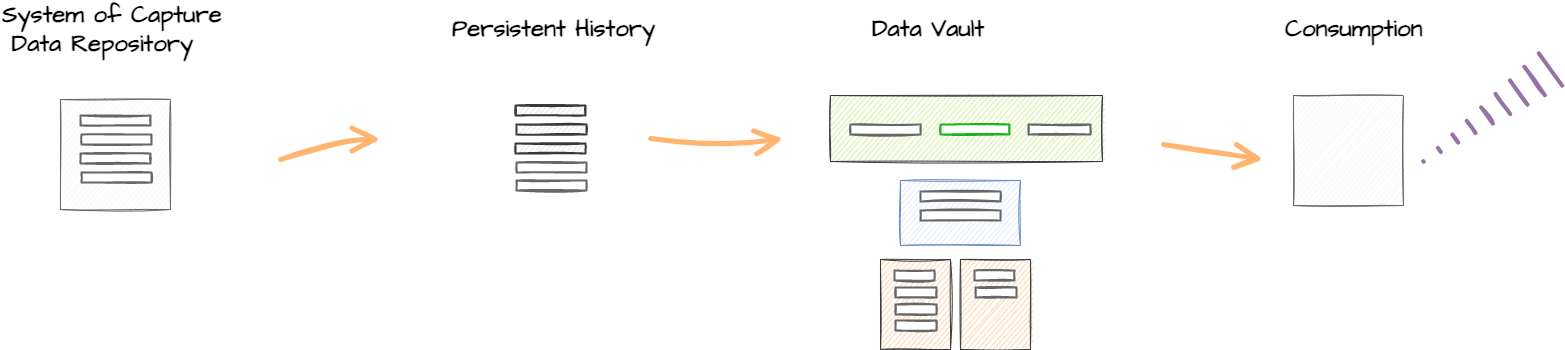

Source data is collected and stored in a Persistent History layer leveraging a Change Data pattern.

The Data Vault model is iteratively designed and implemented over time.

The Data Vault layer is loaded from the Persistent History layer rather than directly from the System of Capture.
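As an illustration of the first step, the following is a minimal sketch of one possible Change Data approach: hash-based change detection that appends a record to the Persistent History only when a source row is new or has changed. The function names (`row_hash`, `capture_changes`), the record layout and the use of an in-memory list are all assumptions made for this example; the pattern itself does not prescribe a particular change-detection technique or storage format.

```python
import hashlib
import json
from datetime import datetime, timezone


def row_hash(row, key_columns):
    """Hash the non-key attributes of a row so changes can be detected cheaply."""
    payload = {k: v for k, v in row.items() if k not in key_columns}
    encoded = json.dumps(payload, sort_keys=True, default=str).encode()
    return hashlib.sha256(encoded).hexdigest()


def capture_changes(history, source_rows, key_columns, load_ts=None):
    """Append a history record for every new or changed source row.

    `history` is a list of records in load order (hypothetical layout);
    it is only ever appended to, never updated in place.
    """
    load_ts = load_ts or datetime.now(timezone.utc)

    # Latest known hash per key; later records overwrite earlier ones.
    latest = {rec["key"]: rec["hash"] for rec in history}

    appended = []
    for row in source_rows:
        key = tuple(row[c] for c in key_columns)
        current = row_hash(row, key_columns)
        if latest.get(key) != current:
            rec = {"key": key, "hash": current, "load_ts": load_ts, "data": dict(row)}
            history.append(rec)
            appended.append(rec)
            latest[key] = current
    return appended


# Usage: the key columns play the role of the Change Data driver/key.
history = []
capture_changes(history, [{"id": 1, "name": "Ann"}], ["id"])   # new row: stored
capture_changes(history, [{"id": 1, "name": "Ann"}], ["id"])   # unchanged: skipped
capture_changes(history, [{"id": 1, "name": "Anne"}], ["id"])  # changed: stored
```

Note that no business modeling is involved here: the only upfront decision is which columns identify a row in each source table.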

Context

A discussion on when this pattern could be applied

This pattern stores change records for all data from the System of Capture, without requiring upfront effort to model this data to match an organisation's core business concepts and processes.

The organisation's core business concepts and processes are then modeled as required using Hubs, Sats and Links, and the Persistent History data is loaded into these objects.

The Data Vault layer is primarily focussed on solving Data Integration problems.

The Persistent History layer can be based on a number of patterns, including a Source Raw Data Vault.

Impact

The likely consequences of adopting this pattern

FOR

Requires less modeling up front

Once you have identified the Change Data driver/key for each table, the data can be quickly loaded into the Persistent History layer. The raw data can therefore become available for use sooner, rather than having to wait until the Data Vault models are defined and implemented.

Model at any time

Because change data is stored in a persistent format in the Persistent History layer, you can model and load the Data Vault Hubs, Sats and Links in the future, and these will automatically inherit the historical records on the first load.
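To make the "inherit the historical records on the first load" point concrete, here is a minimal sketch of a first load of one Hub and one Sat from existing Persistent History records. Everything named here is hypothetical: the `customer_id` and `name` columns, the `crm` record source, the record layout, and the choice of MD5 over an upper-cased business key for the hash key (one commonly used convention, not something this pattern mandates).

```python
import hashlib
from datetime import date

# Hypothetical Persistent History records captured before any Data Vault
# objects were modeled for this entity.
history = [
    {"customer_id": "C1", "name": "Ann", "load_date": date(2023, 1, 1)},
    {"customer_id": "C2", "name": "Bob", "load_date": date(2023, 3, 1)},
    {"customer_id": "C1", "name": "Anne", "load_date": date(2023, 6, 1)},
]


def hash_key(*parts):
    """Build a deterministic hash key from one or more business key parts."""
    joined = "||".join(str(p).strip().upper() for p in parts)
    return hashlib.md5(joined.encode()).hexdigest()


def load_hub_and_sat(history, key_column, attribute_columns, record_source):
    """Replay the full history in load order into a Hub and a Sat."""
    hub, sat, last_seen = {}, [], {}
    for rec in sorted(history, key=lambda r: r["load_date"]):
        hk = hash_key(rec[key_column])

        # Hub: one row per business key, dated from its first appearance.
        if hk not in hub:
            hub[hk] = {
                "hub_key": hk,
                "business_key": rec[key_column],
                "load_date": rec["load_date"],
                "record_source": record_source,
            }

        # Sat: one row per change in the attributes chosen for this Sat.
        attrs = {c: rec[c] for c in attribute_columns}
        if last_seen.get(hk) != attrs:
            sat.append({"hub_key": hk, "load_date": rec["load_date"], **attrs})
            last_seen[hk] = attrs
    return hub, sat


hub, sat = load_hub_and_sat(history, "customer_id", ["name"], "crm")
```

Although the Hub and Sat are created after the fact, the Sat ends up holding both versions of customer `C1`, dated when each change was originally captured, because the load replays the stored history rather than reading the current state of the source system.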

Only model data where it adds value

You only have to model the data that is worth modelling, rather than having to model all data into the Raw Data Vault.

Model data in the order it provides value

You can prioritise the incremental modeling and loading of the Data Vault Hubs, Sats and Links in line with the identified business value of the transformed data.

AGAINST

Increased data duplication

Some data is stored in both the Persistent History and Data Vault layers, increasing data duplication compared to other patterns.

Increased data complexity

While data is made available in the Persistent History layer sooner than in some other Data Architecture patterns, the structure of this data matches the structure of the source system and will typically be more complex to use than data that has been modeled with context already applied.

Increased modeling complexity

Multiple modeling techniques are being utilised, which increases the complexity of the data platform.

Increased data latency

The Persistent History layer is an extra data layer that some other Data Architecture patterns do not have. This introduces an additional level of latency when moving data from source applications through to the consumable layers.

Related Patterns

Other patterns that are similar to this pattern or reliant upon it